0b4k3 – ow (2022-edit) / CUE [Archive]

“CUE” is an XR live performance staged at Shibuya PARCO by 0b4k3 and phi16 for the NEWVIEW FEST 2021 OPENING PARTY.

The performance, which straddles the layers of real space and virtual space, surprised not only followers of XR content but also STYLY engineers and artists.

The creators, 0b4k3 and phi16, explain how “CUE” was born and how it was made.

Joined by Ando from PARCO, the STYLY MAGAZINE editorial department digs into the details.

— Thank you for your time today. Could you each introduce yourselves first?

Ando: I’m Ando from Parco. Thank you.

Parco Digital Promotion Department

Joined the company in April 2013. Was in charge of store promotion at Shizuoka PARCO and Utsunomiya PARCO. Since September 2017, he has been assigned to his current department, where he is in charge of digital-related work including XR. He works on initiatives that promote the use of XR at PARCO, such as “SHIBUYA XR SHOWCASE” at Shibuya PARCO.

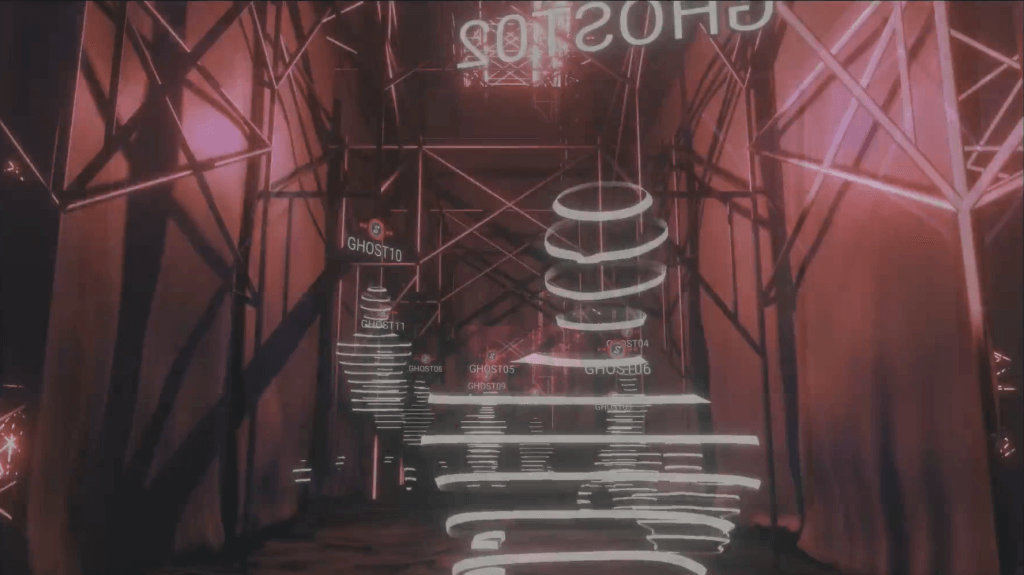

0b4k3: I’m 0b4k3. I usually host and direct a VR club called “GHOST CLUB”. Recently, thanks to various connections, I have also been doing direction work on a number of projects. Thank you.

Organizer and director of the VR club “GHOST CLUB”. In recent years he has directed some of the venues of “SANRIO Virtual Fes in Sanrio Puroland”, held on the VR social platform VRChat, and directed the 2D MV and VR MV for “FORGOTTEN [Vocal: ermhoi (Black Boboi / millennium parade)] / MONDO GROSSO”.

phi16: I’m phi16. Basically, I do whatever I feel like doing, and recently I have mostly been making things related to VRChat.

Writes code. Often works on graphics-related things.

Participated in “CUE” as an artist. I like abstraction and structure.

“CUE” was born from circumstance and chance

— I would like to ask you how “CUE” was born.

0b4k3: First of all, winning the “NEWVIEW AWARDS 2020 PARCO PRIZE” meant that I would co-produce a work with PARCO. Production began in earnest around the spring of 2021, and the initial request from PARCO was to produce an AR work.

PARCO PRIZE winners are given the right to co-produce an AR work with PARCO, but “TECHNOVINEGAR”, the work I submitted to the NEWVIEW AWARDS, was a VR work. So I never expected to receive this award, which is about making an AR work, and when I did, I had mixed feelings of joy, surprise, and anxiety.

I had never been particularly interested in AR, and I had no experience with it in the first place, so I struggled at the planning stage. I wasn’t sure what to make.

Besides, I think on-site adjustment is very important when producing AR works, but we were in the middle of the COVID-19 pandemic, so that was not realistic, and I had to plan the project while imagining the exhibition space. In that situation I kept asking myself, “Is this interesting?” and “Is this really right?”, and progress was poor. That went on for about three months.

It was clear that things would go nowhere at that rate. I figured that adding the VR elements I was already familiar with, on top of the AR elements, would make the work more interesting and easier to produce, so we decided to rethink the original plan of “making an AR work”.

After rethinking the plan, I gradually began to see the finished form I was aiming for. Around the same time, it was more or less decided that the work would be exhibited at “ComMunE” in Shibuya PARCO, so at this stage a path to completion finally came into view.

Also, when I make a work, I usually do the initial 0 → 1 by myself and then do the 1 → 10 work while bouncing ideas off various people. So I thought it would be nice to do this production with someone, and phi16, whom I work with now and then, seemed to be free, so I decided to approach him at this point and have him join. It turns out he wasn’t actually free at all (laughs)

phi16: (laughs)

0b4k3: At that time he seemed to be very busy with another project, but I invited him anyway, pretending not to notice. Fortunately, he said OK.

Once it was decided that I would work with phi16, we made the project more concrete. We would bring the “combination of sound and staging” I had been doing all along into ComMunE, the venue, and because it involved VR rather than being a purely AR-like work, I decided to call it “XR”. I also thought we could offer our own interpretation of the “fusion of virtual and real” that has been attempted in so many ways. The result is “CUE”.

— It seems that the result of combining various phenomena and situations was “CUE”.

0b4k3: Right. In the end, combining the things I always do turned out to be faster and more cohesive.

phi16: The format is based on what we usually do, but I was motivated to do something interesting and out of the ordinary, something we couldn’t do in our usual places.

— It’s very interesting that working within the place you were asked to use, the place that was prepared for you, is what led to “CUE”. How did Mr. Ando feel as “CUE” took shape?

Ando: As 0b4k3 said earlier, I was also unsure at first. What kind of chemical reaction would occur if a creator who won the PARCO PRIZE were combined with a PARCO location? For the time being, I started by proposing to 0b4k3 that he make an AR work.

However, apart from that, there was a theme my team wanted to pursue this year. Until now, our way of connecting to the virtual had been, “Let’s create a point of contact with the virtual by working hard on announcements and staging that tell people a great creator’s AR is on display!” This time, though, I wanted to create a work in which the real and the virtual are connected by necessity.

So when 0b4k3 proposed “CUE”, my reaction was, “Come to think of it, I originally wanted to do something that connected real and virtual, and I had forgotten.”

The proposal came from 0b4k3 by chance, but I think I was very lucky to receive one so close to what I had aimed for at the start.

How ComMunE came to be connected to the virtual

— I heard that CUE’s production was a big coincidence.

GHOST CLUB, which 0b4k3 has been running for a long time, is interesting because it is a virtual place that nonetheless has a very realistic texture. Did “CUE” start from the opposite idea, of wanting to superimpose a virtual place on a real one? I’m curious about that.

0b4k3: To be honest, the premise of this project was that I had to present my work somewhere in an actual PARCO store, so regardless of what I wanted to do, it had to be a work that overlaps reality and the virtual.

Fortunately, the space of ComMunE itself, the venue on the real side, was very good. I couldn’t go to the site, so I was given some photogrammetry data for reference, and when I tried placing lighting and a DJ booth on the model data, it felt pretty good. It looked so good that it already felt complete.

However, at that stage, we still hadn’t hit on an idea that felt like “this is it”. Meanwhile, phi16 liked the “single tube” steel pipes in ComMunE so much that he was wondering if he could somehow incorporate them into the staging.

So we talked about doing something like projection mapping onto the single tubes. What happened with that in the end?

phi16: The alignment is difficult.

0b4k3: Right, right. Alignment is also difficult, and there are various other difficult factors. So, I forgot who said this, but it became a story like “let’s hang a cloth” …

phi16: When I was looking through various photos of ComMunE, I saw that cloth had been hung at past events. So we figured that if it was cloth, they would probably hang it for us if we asked.

0b4k3: So, if we could hang cloth, we thought, let’s hang it vertically… wouldn’t it work if we just hung it up boldly? That was how the conversation went, and it actually turned out better than I expected. At that point I thought, “We’ve won.”

Ando: You wrote it on Twitter (laughs)

0b4k3: I did (laughs). Since ComMunE is already a completed space, just shining lights on it would be weak. By hanging cloth in it, it becomes a space that is “ComMunE but not ComMunE”, and by synchronizing the real-world cloth and steel frame with VR, the connection between reality and the virtual can be built in as structure. That meant we did not have to forcibly reproduce ComMunE itself in VR and did not need to use photogrammetry, and it felt like the piece that had been missing in terms of staging had fallen into place.

— The single tubes really added character, didn’t they? They would appear or fade into the background as the light hit them or didn’t. That was simply cool.

Ando: We had decided to use ComMunE for a separate reason, but as 0b4k3 said, the “single tube” is an icon that connects virtual and real, and I felt that adding a node called “cloth”, which conveys the VR information, made the relationship between real and virtual incredibly strong. On the day of the event, when I watched the stream, where the switcher cut between real and virtual so nicely, the video showed a world in which real and virtual coexisted ambiguously without any sense of incongruity, and I think that was thanks to these two icons. Yeah, the cloth was really good.

Staging that overlaps real and virtual

— Please tell us about the technical side of the staging that overlaps real and virtual in “CUE”.

phi16: The image projected at the venue on the day was not running on STYLY; it was the output of an application I built with Unity. I wanted to control the fine details, and how things looked on screen, remotely, so I prepared various things so that I could operate it without going to the site.

The biggest technical concern was synchronization. The most uncertain part was whether multiple projectors would play at the same timing. The staging is made to match the music exactly, so any drift would be a letdown. I can’t say for sure because I wasn’t on site, but at least as far as I could see on the stream, the monitor at the front was slightly off, and otherwise I think it was mostly fine.

— What was the reason you didn’t use STYLY in the field?

phi16: I learned near the end of production that STYLY can also send HTTP requests, but I thought that would not be suitable for instant switching. I wanted to control the switching of the projection camera and the sound on/off at the venue, and for that it is easier to stay connected over WebSocket the whole time than to check periodically with HTTP requests. So I made a program that connects to my server via WebSocket so that I could operate it in real time.

Thanks to that, responses came back almost instantly and I could spot any problems at the site right away, which contributed greatly to my peace of mind on the day.
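To make the trade-off phi16 describes more concrete, here is a minimal Python sketch of why periodic HTTP checks are a poor fit for instant switching. It is only an illustrative model, not the program used for “CUE”: the function names, the one-second polling interval, and the 50 ms network latency are all assumptions chosen for the example.

```python
import math


def polling_delay(command_time: float, poll_interval: float, latency: float) -> float:
    """Seconds until a polling client learns about a command.

    Hypothetical model: the client polls at 0, poll_interval,
    2 * poll_interval, ... and each response takes `latency` seconds.
    """
    if poll_interval <= 0:
        raise ValueError("poll_interval must be positive")
    # The command is only noticed at the first poll at or after it was issued.
    next_poll = math.ceil(command_time / poll_interval) * poll_interval
    return (next_poll - command_time) + latency


def push_delay(latency: float) -> float:
    """Seconds until a client on an always-open connection (for example a
    WebSocket) receives a command: the server sends it immediately, so only
    the network latency remains."""
    return latency


if __name__ == "__main__":
    # Assumed numbers, for illustration only.
    latency = 0.05       # 50 ms network delay
    poll_interval = 1.0  # client asks the server once per second

    for command_time in (0.25, 0.5, 0.75):
        waited = polling_delay(command_time, poll_interval, latency)
        print(
            f"command at {command_time:.2f}s: "
            f"polling waits {waited:.2f}s, push waits {push_delay(latency):.2f}s"
        )
```

In this model a command can sit unnoticed for up to one full polling interval, while a pushed command is delayed only by the network. Shortening the interval narrows the gap but multiplies the number of requests, which is a common reason to prefer a persistent connection for cue-style control.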

— Were there any moments when you had a hard time on site?

phi16: In the stream, STYLY’s VR scene and the on-site camera footage were composited so that they overlapped, and that was adjusted on the morning of the day. I had someone use a mobile device’s camera to capture the view from the on-site camera position, received the image, matched the position precisely in Unity, and updated the scene again. So the angle was decided on the day itself, and I adjusted it with thoughts like “Does this really match?” or “Well, it’s never going to match perfectly anyway.”

We couldn’t know where the camera would go until it was actually placed on site, and there were many other things to check, so I remember the work that morning being quite hectic.

Ando: Because we were doing this as part of the NEWVIEW FEST opening party, we were affected by things like the stage position changing to fit the overall program, and I thought the event was quite difficult. On site we talked about what we could do if we noticed something was off on the day, and whenever something looked like it might not work, I consulted 0b4k3.

phi16: I felt that perfection was impossible.

0b4k3: We were reminded of how difficult on-site work is.

Ando: Since it was my first time doing this kind of live content, I couldn’t fully identify all the points I needed to keep in mind. Quite a lot was decided at the meeting two days before, and various things came up on the day itself.

0b4k3: Right. There were quite a few elements we couldn’t get a handle on.

Ando: It was only two days before the event that the session was finally fixed.

0b4k3: Actually, I had planned to go to the site on the day and help set up the venue, checking things on the spot right up to the last minute. But because Omicron spread all at once, neither I, nor phi16, nor Discont from Psychic VR Lab, who supported us on this project, could go to the site. In the end, none of the main production members were at the venue except Ando. That became clear a week before the performance.

As a result, there were parts of the originally planned work that we had to change. The original plan for the real side was that some people would wear VR devices inside the cloth and experience the staging on the VR side, while people at the venue without an HMD would watch the “scene” itself: the VR-side staging projected on the cloth, the lighting synchronized with it, and the people wearing the VR devices. However, the infection situation worsened and we could no longer bring an audience to the venue, so that became impossible.

As a result, the work came closer to an idea I had personally held independent of this project: “Only my avatar exists in an unmanned real-life venue, and I perform live in that state.” So in that respect I actually welcomed the change. Of course, it wasn’t all convenient. I was made keenly aware that a site involving many people is unstable like this, and that many things have to be chosen and decided at the last minute.

— I thought it worked well that there were no humans at the live venue, just the avatar named “GHOST” swaying.

0b4k3: I probably shouldn’t say this, but I’m honestly glad we could do it without an audience (laughs). As Ando said, things on site were in flux the whole time, and the main production team couldn’t make adjustments on site as planned, so if there had been an audience in that situation, I don’t think it would have gone well… Also, in terms of phi16’s system, if we had included an audience…

phi16: Once VR is involved, the synchronization between sound and video becomes even more doubtful…

0b4k3: It was a very unstable situation and we had to handle it remotely, so I wanted to avoid adding complications as much as possible, and I’m glad it turned out that way.

Ando: I agree. In the first place, there was also concern that the venue’s power consumption would be dangerous if 5 VR devices and 5 PCs were added. Fortunately, many of those issues went away. But even setting that aside, when I saw the final video, I felt, “This was the right answer.”

“CUE” was born because it was STYLY

— Finally, I would like to hear your candid opinions from a creator’s point of view, such as ways you wish STYLY could be used.

0b4k3: This time, I set out to “produce using STYLY” from the beginning, so I didn’t have any complaints or anything I had to give up on.

phi16: Well, earlier we talked about how “CUE was born by chance”, but rather than chance, it was more a case of “trying to do as much as we could within a fixed format”. In a sense, it was also inevitable. So if we did it in a different place, we would do something different, and that’s probably for the best. I think “CUE” doesn’t look like a typical STYLY work, but I think it is a natural expression that came from exploring the range of what STYLY can do.

0b4k3: I think “CUE” was born because the work was exhibited on STYLY. If I had done it on VRChat, I would have made something different. It would be a lie to say there is no stress in having to make something to fit the platform, but I think the positive influence of the platform outweighs that. I think that’s a great thing for creators.

phi16: If anything, it is often more fun to work with a few constraints.

0b4k3: I also like playing with self-imposed restrictions, so in that sense, I really think this project was very good. After all, I think the best thing about STYLY is how accessible it is. Being able to experience a work from various devices such as the Web, smartphones, Quest, and PCVR is a very good point.

phi16: The fact that it has to run on mobile devices is a big factor, so I was able to work toward efficiently producing good visuals with simple lighting.

0b4k3: Right, right. On that note, “CUE” has only one real-time point light, doesn’t it? Only one light illuminates the space, and that is all the information there is.

I think that on VRChat I would have placed lights more lavishly, but having to support multiple platforms meant stripping away all sorts of things, and as a result only the essence remained. I think that is why “CUE” was born.

phi16: I have always been the type of person who makes things to suit the place, and I want to do anything interesting, so I’m glad that came through properly in this work.

0b4k3: Right. I’m glad I was able to do an XR live.

phi16: XR live called XR live.

— It really was an XR live.

0b4k3: I was talking about “XR Live” because I wanted to do an interview with phi16.

Ando: This time, we first made a virtual ComMunE with cloth hung in it, then hung cloth at the real site to match, and the audience was on the virtual side. The virtual was the main body and reality followed it, and I felt I had never seen an XR live with that kind of relationship. It was fresh and interesting.

phi16: That’s exactly what I talked about (laughs)

0b4k3: I have the impression that most of the XR live concerts so far have been made from the standpoint and perspective of the real side.

phi16: It’s like a quote from before the VR era.

0b4k3: Right. So it was interesting for us to see what results when people who are usually active mainly in VR make something that reaches into reality.

However, since it was something I had never attempted before, I was worried about whether we could properly immerse people on the real side. When I saw the reactions of people who actually watched it, I was relieved that it had worked and felt it had been a worthwhile attempt. It may be an exaggeration to call it new, but I think we were able to present a different form of XR live.

The XR live performance “CUE” was created by bringing together the creativity of 0b4k3 and phi16, who are active in VR, and Shibuya PARCO, which has a physical venue. It is a unique work that takes “XR Live” to a new dimension by crossing contexts such as VR and AR, and reality and the virtual.

Even if you are familiar with VR but not with STYLY, try experiencing “CUE”, which has been remade as a new archive.

How to experience a VR scene

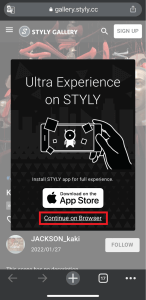

If you are accessing this page from a smartphone, please click on the “Experience the Scene” button (*If you are experiencing the scene on a smartphone for the first time, please also refer to the following instructions).

After clicking, the following screen will be displayed.

If you have already downloaded the STYLY Mobile app, please select “Continue on Browser”.

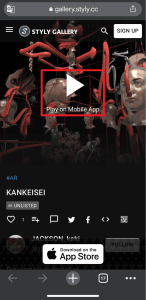

You can then select “Play on Mobile App” to experience the scene.

If you have an HMD device, click the “Experience the Scene” button from your PC (web browser), then click the VR icon on the scene page.

Download the STYLY Mobile app

Download the Steam version of STYLY app

https://store.steampowered.com/app/693990/STYLYVR_PLATFORM_FOR_ULTRA_EXPERIENCE/

Download the Oculus Quest version of STYLY app

https://www.oculus.com/experiences/quest/3982198145147898/

For those who want to know more about how to experience the scene

[VR] For more information on how to experience VR scenes, please refer to the following article.

“[Summary] How to experience STYLY scenes VR/AR(Mobile) / Web Browser Introduction by step”