STYLY recently held a Hackathon!

STYLY Hackathon is an event where members of Psychic VR Lab, the developer of STYLY, create content according to a theme within a certain timeframe.

The overall theme of this year’s Hackathon was “Content that Generates Buzz.” Participants were divided into five teams. Each team had to brainstorm how to create content that would generate a strong response and produce their ideas within a short, two-day period.

Using STYLY’s new “Modifier” feature, Hackathon participants were able to add animation, interaction, and other effects to their assets in STYLY Studio. For more information on modifiers, please see this article.

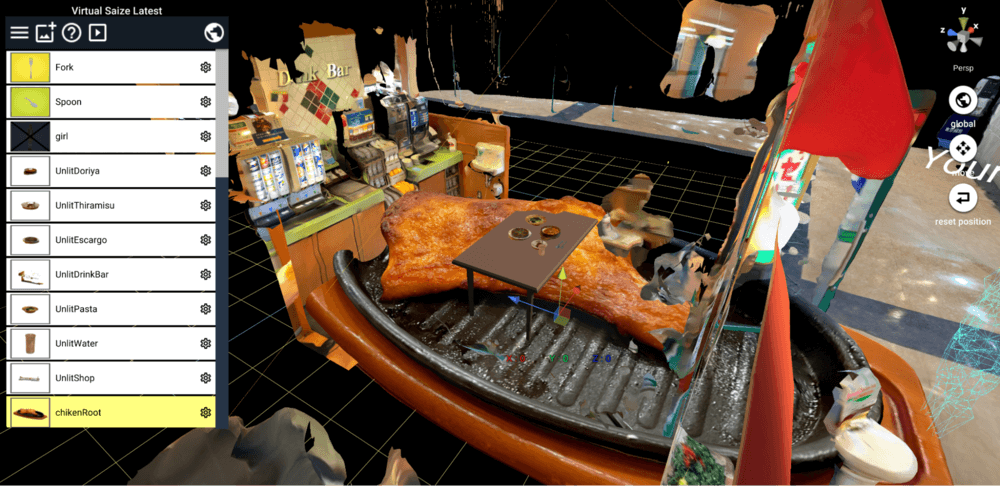

In this final Hackathon article, we summarize the production process and tips of Team E, the “Virtual Saize Production Committee.”

Team E set out to “reproduce Saizeriya in a virtual space” and succeeded by making photogrammetry central to their production process. Photogrammetry is a method of creating a three-dimensional computer graphics (3DCG) model by photographing a subject from various angles and then analyzing and integrating the resulting digital images. This article provides some interesting insights into the use of photogrammetry in XR productions. We hope you enjoy it!

“We Will Re-create Saizeriya in a Virtual Space and Develop It Together”

Since the theme of this year’s Hackathon was “buzz,” Team E began by thinking about what kind of content would generate buzz. At the time, there was Internet buzz about a girl who is happy at Saizeriya, a popular Japanese chain of Italian restaurants, so the team decided to use Saizeriya as their theme.

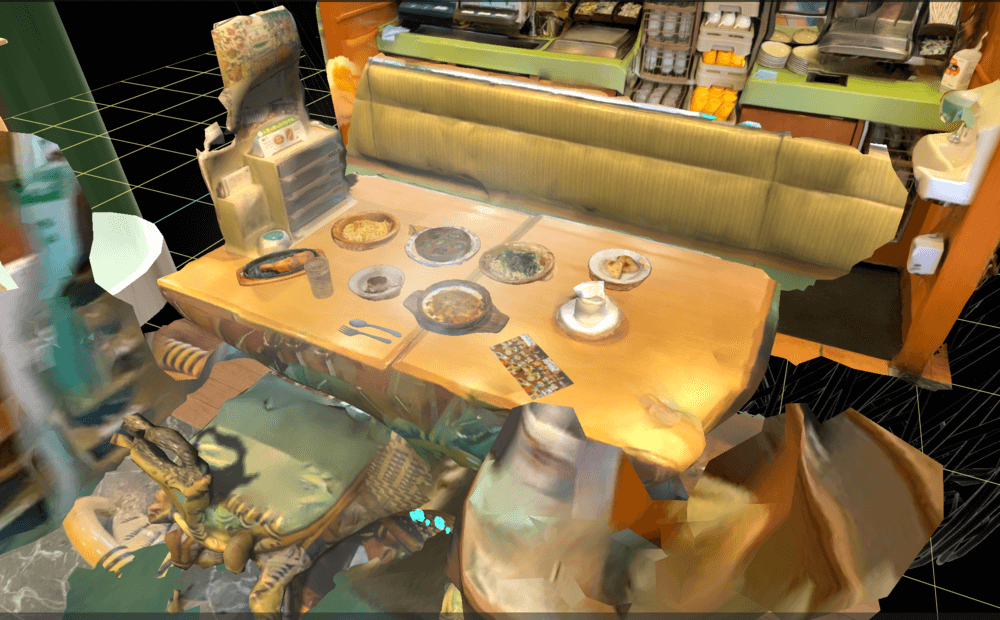

Next, they decided to build the project around user participation: photogrammetric models would be collected from users to attract more people to the site. The result is a virtual Saizeriya restaurant, with both the food and the restaurant itself captured with photogrammetry.

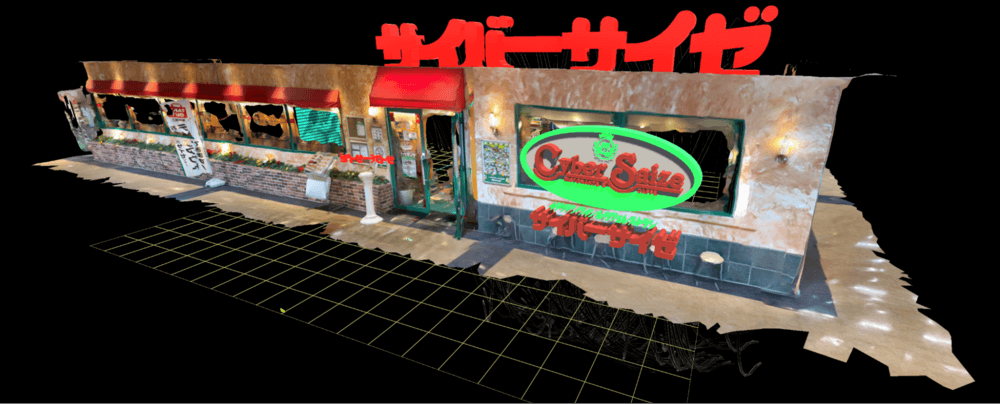

Furthermore, futuristic objects were added to the site by in-house creators, creating a chaotic restaurant that could not possibly be the real Saizeriya but rather a “Cyber Saize.” In fact, with this augmented reality (AR), you can turn any place on earth into a Saizeriya. In the Cyber Saize, food is still available for take-out and delivery, but the new experience of “summoning the store itself” will push humanity to the next level.

However, Team E found that some tasks did not work out so well.

Team E’s original plan was to get outside users to help them photogrammetrically capture the entire menu. To that end, the team even created an upload form using Google Forms in Google Drive, including terms and conditions regarding uploaded data. However, Team E found it difficult to get people outside of the company to go to the trouble of performing photogrammetry. As a result, they were disappointed to have zero contributors, and in the end, only the team members did the photogrammetry.

Regardless, view “Cyber Saize” below!

We opened a Saize in the real metaverse!!

Now you can experience Saize anywhere!!!

Delivery is already old news! For now, just hit Like and RT! #STYLYハッカソン #サイゼリヤ https://t.co/inY1DR8Xh4 pic.twitter.com/kURLkDsppW

— Takanori.I|デザイナー / ディレクター (@takanori_gt) February 16, 2022

Generating 3DCG Models from Photographs

The team members performed the photogrammetry individually, and because each member had different photogrammetry software on their smartphone, Cyber Saize unintentionally ended up using models from a variety of photogrammetry applications.

For reference, here are the menu items and the applications used to capture them. This time, Team E worked with Trnio, an easy-to-use 3D-scanning application that does not require LiDAR, as well as MetaScan and Scaniverse, which are LiDAR-scanning applications.

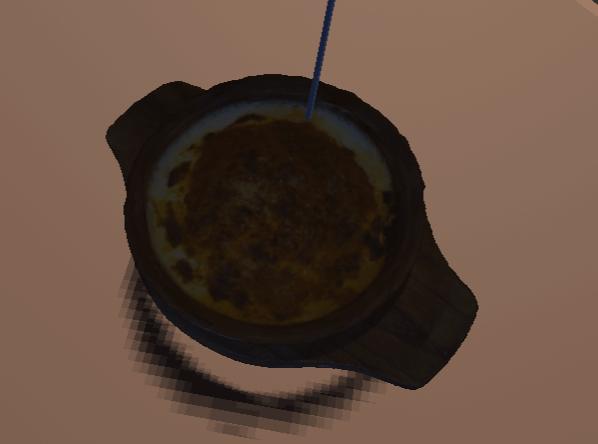

Here is the menu: Peperoncino (Trnio), oven-baked escargot (MetaScan), spaghetti with spinach (MetaScan), focaccia (Trnio), spicy chicken (MetaScan), water (MetaScan), Tiramisu (MetaScan), and rice casserole (MetaScan).

MetaScan: https://metascan.ai/

Trnio: https://www.trnio.com/

Scaniverse: https://scaniverse.com/

As you can see, the dishes can be reproduced with a fairly high degree of fidelity.

Team E had previously heard that transparent or reflective objects are difficult to reproduce with photogrammetry, but when they used a glass cup filled with water from a Saizeriya restaurant as a subject, they were surprised to find that it was reproduced beautifully.

The use of each photogrammetric application on STYLY is summarized here:

Finding the Right Balance Between File Size and Appearance

One team member scanned the restaurant's exterior wall with Scaniverse before going in to order. However, when he tried to scan the food with Scaniverse, he could not create a very good 3D model, so he used the Trnio application instead. Thus, 3D scanning with Scaniverse may work well for capturing a large area, such as an exterior wall, but it may not work so well for small objects.

In addition, the team found that the models output by the different photogrammetry applications differed. For example, with MetaScan, the quality can be changed at the time of output, so a medium-quality output can be chosen. However, the models output by Trnio tend to have a larger number of polygons and a larger file size. This makes the scene harder to edit, because the total file size grows with each model added.

To solve this problem, the team adopted a policy of reducing the size of each 3D model individually. For small dishes especially, they reduced the polygon count and limited the texture size to 1K. Since small objects such as dishes take up little room in a 3D space, their coarseness is not very noticeable, even after reduction.

On the other hand, for large objects, such as desks, the team reduced the polygon count but did not lower the texture resolution. This is because, if the texture resolution of a large object is reduced, the coarseness will be noticeable. For example, the exterior wall of the Saizeriya is a model made completely with photogrammetry, and its polygon count was reduced while its texture resolution was retained. In this way, the team adjusted the degree of reduction according to the size of each 3D model.
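The size-based policy described above can be sketched in a few lines of Python. This is only an illustration, not the team's actual tooling: the function name `plan_reduction`, the 0.5 m threshold for "small" objects, and the 25% polygon keep ratio are all hypothetical values chosen for the example. The only figures taken from the article are the 1K texture cap for small dishes and the unchanged texture resolution for large objects.

```python
from dataclasses import dataclass


@dataclass
class ReductionPlan:
    target_polygons: int  # polygon count to decimate down to
    texture_size: int     # texture edge length in pixels


def plan_reduction(longest_side_m, polygons, texture_size,
                   small_threshold_m=0.5,  # hypothetical cutoff for "small"
                   keep_ratio=0.25,        # hypothetical share of polygons kept
                   small_texture=1024):    # 1K cap for small dishes (from the article)
    """Decide how far to reduce a scanned model based on its physical size."""
    # Polygons are reduced for every model, large or small.
    target = max(1, int(polygons * keep_ratio))
    if longest_side_m <= small_threshold_m:
        # Small objects: coarseness is hard to see, so cap the texture at 1K.
        new_texture = min(texture_size, small_texture)
    else:
        # Large objects: keep the texture, since lower resolution would show.
        new_texture = texture_size
    return ReductionPlan(target, new_texture)


# Example inputs (made-up numbers): a dish and an exterior wall.
dish = plan_reduction(longest_side_m=0.3, polygons=200_000, texture_size=4096)
wall = plan_reduction(longest_side_m=8.0, polygons=400_000, texture_size=4096)
print(dish)
print(wall)
```

The actual polygon reduction and texture resizing would still be done in a 3D tool; the sketch only captures the decision of which models get which treatment.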

Solving the “Textures Appear Dark” Problem

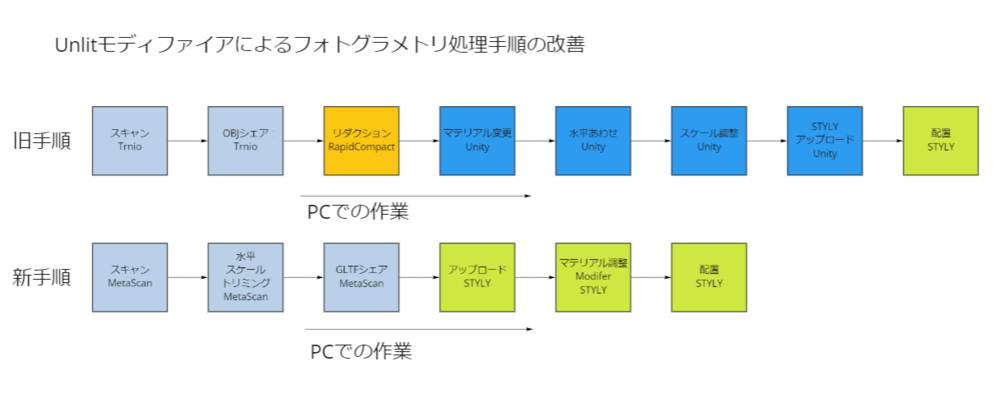

Team E encountered another problem while using photogrammetric models in STYLY. They found that “textures appear dark.” This happens because the default material used in STYLY Studio is incompatible with some photogrammetric models. In the past, the solution was to import the 3D model into Unity, apply the Unlit shader to the material, and upload the object. This time, however, the team tried the Modifier function to see if they could solve the dark textures problem.

To that end, STYLY created the UnlitMaterialModifier to address the problem of dark textures. Using this modifier, it is possible to apply the Unlit shader to the target model while preserving the texture.

By applying the UnlitMaterialModifier, the data output from the 3D-scanning application can be uploaded directly into STYLY Studio and still look like the original image. The team found that this eliminates some steps and makes importing photogrammetric models easier. (Note: this modifier is not yet released to the public.)

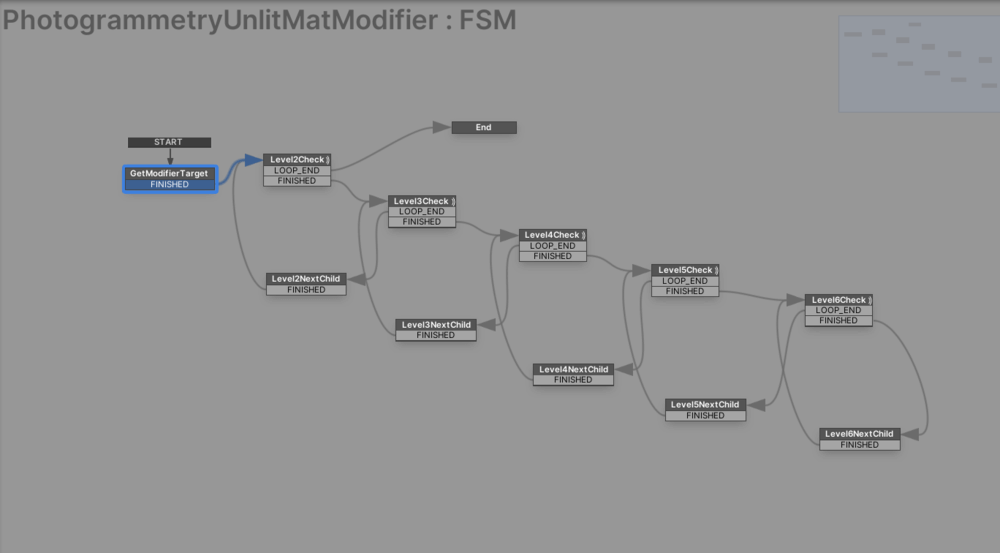

To implement the UnlitMaterialModifier, a process had to be written in PlayMaker that traverses all GameObjects in the GameObject tree. The current implementation was somewhat time-consuming to build and is redundant, because it duplicates the process once for each level of depth to be searched. STYLY will strive to develop a smarter implementation when we create the release version.
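For readers curious what a depth-independent traversal might look like, here is a minimal Python sketch. It is not STYLY's implementation and does not use PlayMaker or Unity: the `Node` class is a hypothetical stand-in for a GameObject, and the `shader` and `texture` fields are simplified placeholders for a material. The point is only that a single loop with a stack can visit every level of the tree, so the process does not have to be copied once per depth.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical stand-in for a GameObject in a scene tree."""
    name: str
    shader: Optional[str] = None    # None means the node has no material
    texture: Optional[str] = None
    children: List["Node"] = field(default_factory=list)


def apply_unlit(root: Node) -> int:
    """Switch every material in the tree to an unlit shader, keeping textures.

    Returns the number of nodes whose shader was changed.
    """
    changed = 0
    stack = [root]
    while stack:
        node = stack.pop()
        if node.shader is not None:
            node.shader = "Unlit"   # the texture field is left untouched
            changed += 1
        # Children are pushed onto the stack, so any depth is handled
        # by the same loop.
        stack.extend(node.children)
    return changed


# Example tree (made-up names), three levels deep under the root.
scene = Node("CyberSaize", children=[
    Node("Wall", shader="Standard", texture="wall.png"),
    Node("Table", children=[
        Node("Dish", shader="Standard", texture="dish.png", children=[
            Node("Fork", shader="Standard", texture="fork.png"),
        ]),
    ]),
])
changed = apply_unlit(scene)
print(changed)
```

An explicit stack is used instead of recursion so that very deep trees cannot hit a recursion limit; a recursive version would work equally well for typical scenes.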

Reflections

To be honest, we felt at first that we could not come up with any ideas at all, and we started the Hackathon project with a lot of anxiety. However, it was quite an interesting experience to see the quality of the photogrammetric models improve as we gathered more of them. As a result, we ended up with an idea that even we didn’t really understand, but we felt we were able to present a new concept. We were also happy to receive more responses on Twitter than expected.

The experience of entering a store with an AR display that is the same size as a real store is quite impressive; give it a try! On the practical side, we were able to create a prototype function that allows models captured with photogrammetry to be easily optimized in STYLY.

We believe that Cyber Saize will lead to the creation of many models using photogrammetry in STYLY in the future. We can hardly wait for the release of the UnlitMaterialModifier, which solves the “textures appear dark” problem without using Unity.

This was a five-part article on the STYLY Hackathon. The Hackathon was a project that inspired many ideas for various methods of expression and their implementation.

![[iPhone14 Pro / iPadPro 2021] Using Forge to create a 3D model scene](https://styly.cc/wp-content/uploads/2021/04/forge1-160x160.png)

![[iPad / iPhone] How to use Trnio, a 3D scanning app with photogrammetry and ARKit support](https://styly.cc/wp-content/uploads/2019/06/スクリーンショット-2019-06-03-15.03.49-160x160.png)

![[iPad / iPhone Pro Series] How to easily create 3D models with the free 3D scanning app “Scaniverse”.](https://styly.cc/wp-content/uploads/2021/08/eyecatch-1-160x160.png)